This article introduces Sora2, OpenAI's latest video generation model. Honestly, Wan2.5 only just came out and I'm a bit overwhelmed, but Sora2 is too significant in the video generation space to skip, so ~~I'm dragging myself here~~ I'm here to introduce it.

Model Overview

Sora2 is the second generation of Sora, OpenAI's video generation model. The first-generation Sora was a disappointment: after the flashy demos, the model you could actually try didn't live up to expectations. This new model, however, genuinely competes with Veo3 and Wan2.5. Veo3 was the first model with integrated audio, and Wan2.5 challenged it with improved live-action quality and lower generation costs. Sora2 takes a somewhat different approach and focuses on keeping the narrative consistent across the entire video. In terms of overall performance, it's fair to say it's on par with Veo3 and Wan2.5.

Sora2's Characteristics

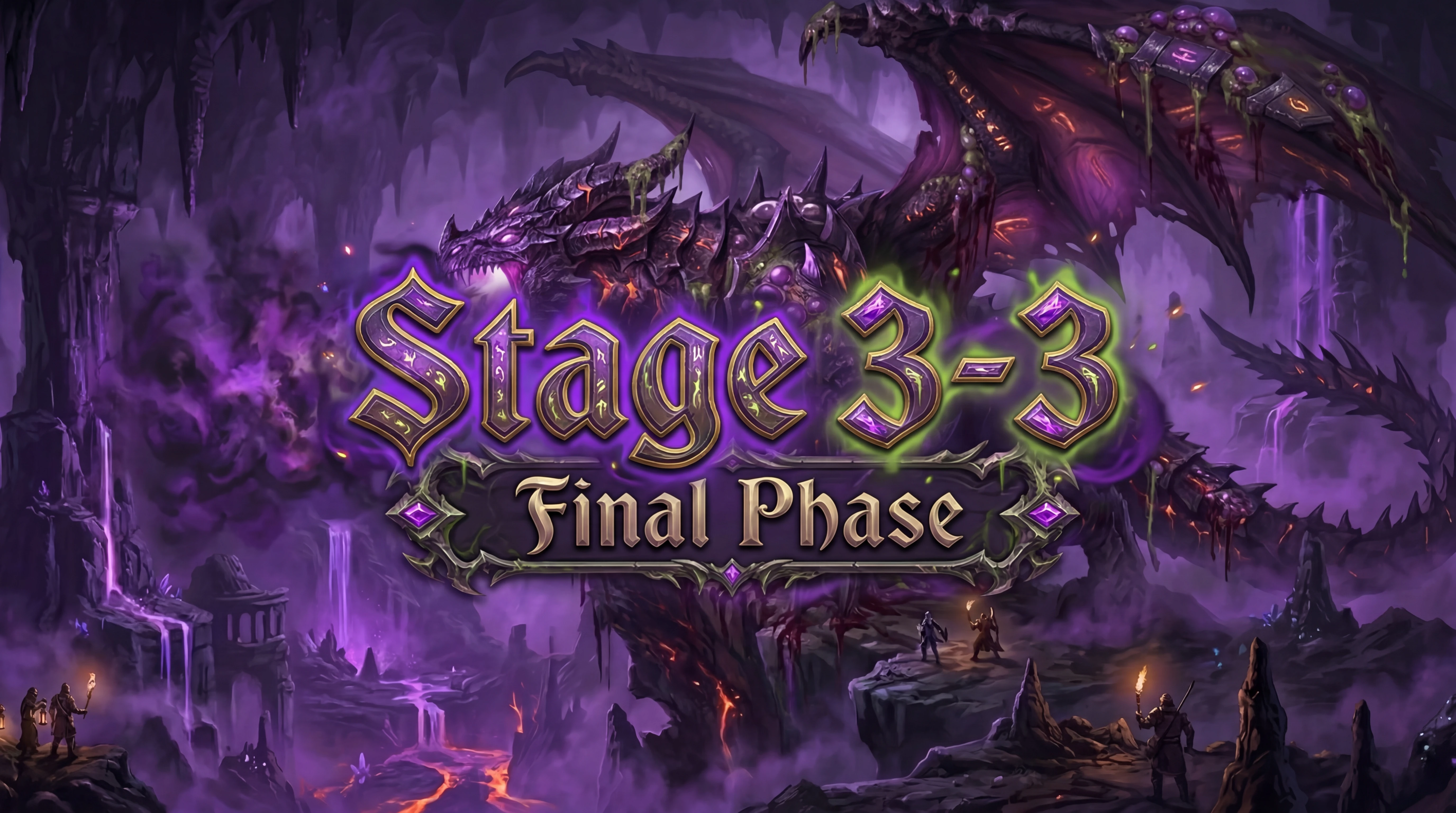

Sora2's strengths differ from those of Veo3 and Wan2.5. Veo3 and Wan2.5 boast overwhelming quality in live-action video but tend to be relatively weak with anime-style content. Sora2, by contrast, reproduces anime-style content, especially existing IP works, with extremely high accuracy and consistency. Of course, Twitter is up in arms about the copyright issues, but in terms of model performance it's unmatched here. On the other hand, it falls slightly behind Veo3 and Wan2.5 in live-action content. That said, the image quality isn't bad even for live-action, and if you use the Cameos feature you can maintain a certain level of character consistency.

Overall, Sora2 is less prone to narrative breakdowns. Veo3 and Wan2.5 sometimes generate videos with questionable elements, and Sora2 does so less often. By default, the editing style and camera work are optimized for short-form video. In short, it generates videos that could go viral on YouTube Shorts. Of course, you can steer cuts and camera work through prompts, at least to a degree.

Regarding audio, the quality is about the same as Wan2.5: not particularly bad, and not quite as good as Veo3, though the gap isn't huge. Sora2's real focus, however, is reproduction accuracy. For videos of IP works, its audio reproduction is remarkably accurate. Veo3 and Wan2.5 tend to miss the mark here and typically generate off-target audio for IP works. Whether in T2V (text-to-video) or I2V (image-to-video), they reproduce the visuals well but the audio poorly. Sora2, in contrast, boasts high reproduction accuracy for both visuals and audio. In fact, its audio reproduction might even be better than its visual reproduction.

Cameos Feature

When generating videos of people, Sora2 can use the Cameos feature to maintain character consistency. A Cameo can only be registered by authenticating a real person. Apparently this authentication is quite strict, and it doesn't work with AI-generated OCs (original characters) and the like. When generating live-action videos without Cameos, censorship kicks in if you target a specific person. This is probably a measure against deepfakes, but it's a problem that live-action footage containing people gets censored. To make matters worse, the Cameos feature currently only works in the iOS app. I don't have an iOS-compatible body, so I can't try it. Disappointing! iPhones and Macs have great hardware, but the OS is just too difficult to use. Well, I should stop dissing Apple before I get in trouble.

Comparison with Competing Models

This section compares Sora2 with its competitors, Veo3 and Wan2.5. First, all three models support integrated audio generation. Among the video models currently available on SeaArt, these are the only three that do.

As for audio quality, if Veo3 is a 10, Wan2.5 would be an 8 and Sora2 a 7. Unfortunately, this isn't an accurate comparison. Wan was tuned with Veo in mind, which makes it easy to create similar videos for comparison, but Sora is tuned completely differently from the other two, which makes it hard to create comparison videos in the first place. After several rounds of trial and error, my conclusion is that Veo3's audio quality still hasn't been surpassed. That said, Sora2's audio quality isn't bad, so it's acceptable. More importantly, Sora2 produces the most natural-sounding speech of the three models. I only understand English and Japanese, so I can't say for certain, but even so, it has a naturalness that sets it apart from previous models.

Next, regarding image quality, all three models have comparable performance. Veo3 and Wan2.5 achieve excellent image quality especially in live-action content, but leave something to be desired in anime-style content. Sora2 has excellent reproduction accuracy in anime-style content but gives a lackluster impression in live-action.

Finally, prompt interpretation. There's a significant difference here. Veo3 and Wan2.5 generate videos that are faithful to the prompt, so with detailed instructions you can get a video exactly as you envision it. Sora2, on the other hand, partially ignores prompt instructions about composition and cuts. I thought maybe my prompt writing was poor, but I saw the same tendency in other people's Sora2 videos as well. Every video Sora2 outputs shares the cuts and compositions common on YouTube Shorts and TikTok, and there were cases where it stubbornly ignored my attempts to make it resemble Veo3 or Wan2.5 through prompt control. How obstinate. Looking at it the other way, though, since you can create many TikTok-oriented videos with simple prompts, it may be well suited to taking multiple shots at going viral.

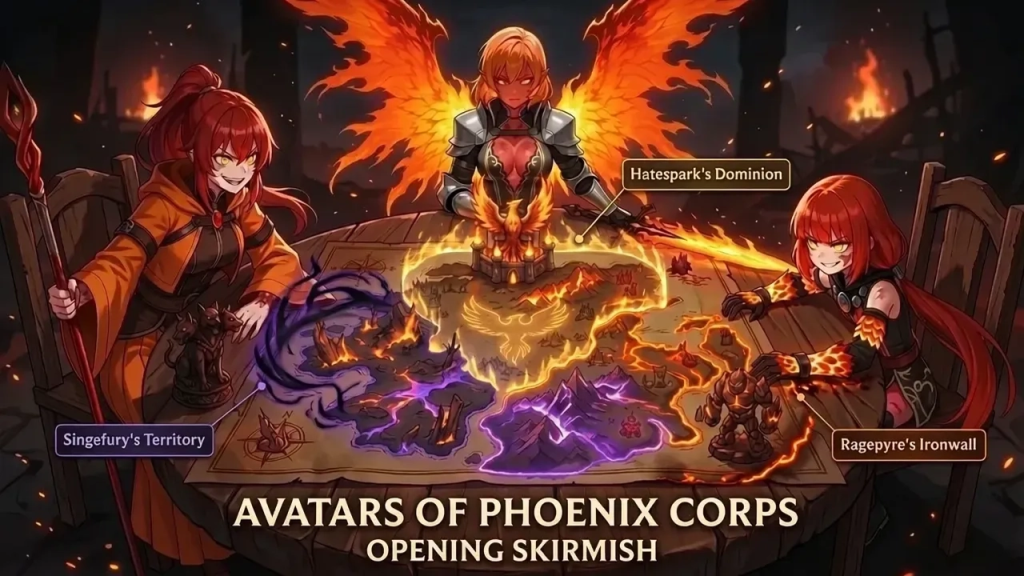

Sora as a Platform

Alongside the release of Sora2, OpenAI announced the social app Sora. The shared name is confusing, but essentially you share videos generated with the model Sora2 on the platform Sora. The platform adopts a vertical-scroll UI very similar to TikTok's, designed to draw users away from TikTok, YouTube Shorts, Instagram Reels, and similar platforms. This suggests several aims: a new business strategy for OpenAI, research into user demand, and breaking free from dependence on other companies, such as Google, for distribution.

Looking at the video generation model Sora2 again from this perspective, you can see why it emphasizes narrative consistency. Realistically, with OpenAI's technical capabilities and computational resources, tuning Sora2 to be strictly superior to Veo3 wouldn't have been impossible. Yet they gave Sora2 strong narrative consistency even at the cost of some photorealism, most likely with posting to the social app Sora in mind.

Of course, this is just my speculation, but seen this way, everything falls into place. It explains why Sora2 has overwhelming reproduction accuracy for IP works, why it includes the unique Cameos feature, and why it generates 9:16 vertical videos by default. Whether Sora will succeed is unknown, but I think it's an interesting initiative. Looking at Bluesky and Threads, I don't have much hope for new social apps that compete with existing platforms, but since Sora is built around generated content, who knows how it'll turn out.

IP Copyright Issues

What stirred up Twitter immediately after Sora2's release was the IP copyright issue. Personally, I think Japanese IP should be more open, but Japanese users seem to love copyright, and apparently a flood of feedback was sent to OpenAI. Perhaps the risk of ignoring it was too high, because Sora2 now censors generation of major IP content. Not only strictly protected properties like Disney but also IPs with more lenient terms are censored in the same way. I think the most interesting use of Sora2 is reproducing IP works, so such a high probability of censorship is unacceptable. An excellent model is being wasted. It's fine if you can't post or download the result, but at least let the user who generated it see it.

I have no idea what the future holds for IP issues, but if the restrictions stay as they are, this excellent model will sink. Whether rights holders will change their copyright terms, or whether IPs with looser terms will rise and reshape the market, is completely unpredictable. My personal prediction is that generative AI promotes the democratization of creation, so individual OCs may hold high value in the future. But what do I know.