Type

Checkpoint

Published

2024-08-20

Base Model

Flux.1 S

About this version

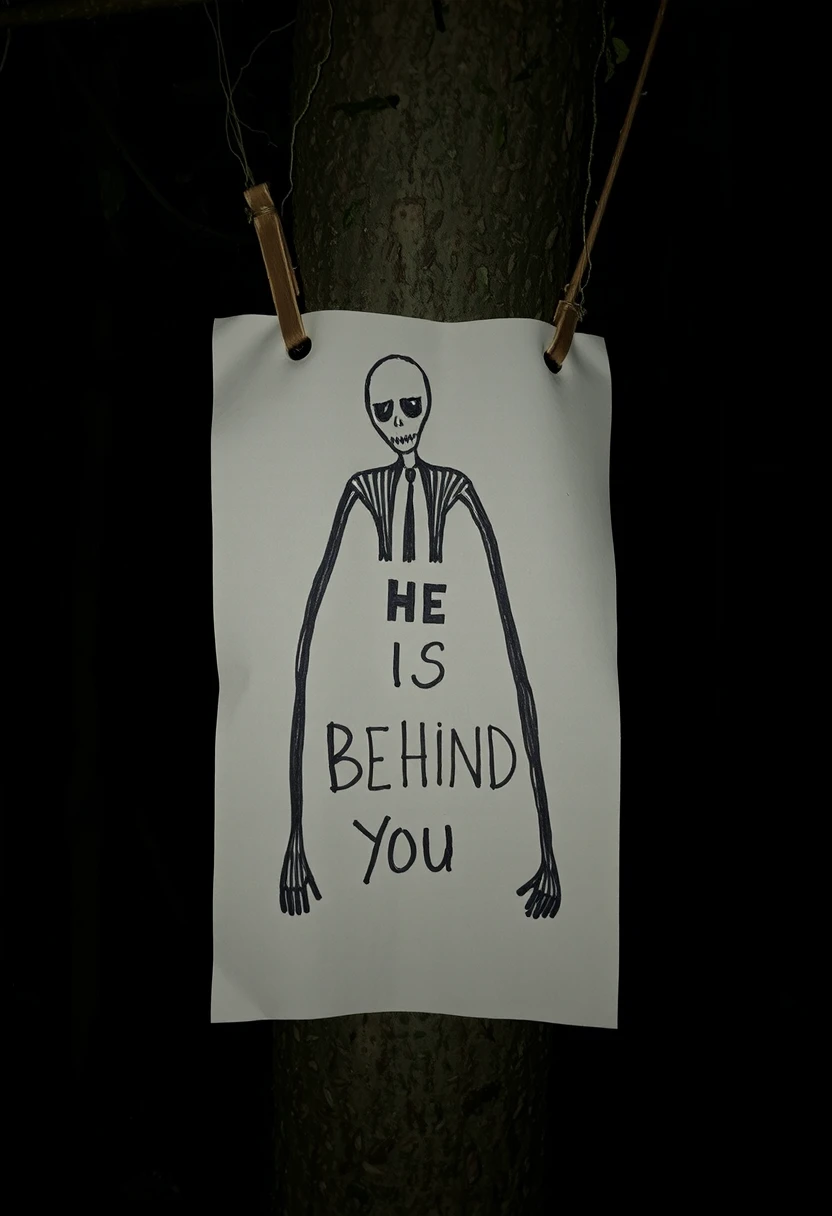

I created the original (v1) from an fp8 checkpoint. Because the weights were quantized twice (once to fp8, then again for the GGUF), v1 accumulated extra error and couldn't produce sharp images. For v2 I manually merged the bf16 Dev and Schnell checkpoints and then made the GGUF from that merge. This version produces more detail and much crisper results.
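
For anyone curious why quantizing twice hurts, here is a rough toy sketch. It does not use the real fp8 or GGUF formats: `quantize`, `FIRST_STEP` and `FINAL_STEP` are made-up stand-ins (a plain uniform rounding grid with arbitrary step sizes), and the "weights" are just evenly spaced numbers. The only point it illustrates is that rounding straight onto the final grid picks the nearest grid point, so taking a detour through another grid first can only match or worsen the error.

```python
def quantize(x, step):
    """Snap x to the nearest multiple of step (toy uniform quantizer)."""
    return round(x / step) * step


def mean_abs_error(originals, approximations):
    """Average absolute difference between two equally long lists."""
    return sum(abs(o - a) for o, a in zip(originals, approximations)) / len(originals)


# Hypothetical step sizes, chosen only for illustration.
FIRST_STEP = 0.25  # stand-in for the intermediate (fp8-like) precision
FINAL_STEP = 0.4   # stand-in for the final (GGUF-like) precision

# Stand-in "weights": 1000 evenly spaced values in [0, 1).
weights = [i / 1000 for i in range(1000)]

# Path A: high-precision weights quantized once, straight to the final grid.
direct = [quantize(w, FINAL_STEP) for w in weights]

# Path B: weights quantized to the intermediate grid, then to the final grid.
double = [quantize(quantize(w, FIRST_STEP), FINAL_STEP) for w in weights]

direct_error = mean_abs_error(weights, direct)
double_error = mean_abs_error(weights, double)

print(f"single quantization error: {direct_error:.4f}")
print(f"double quantization error: {double_error:.4f}")
```

In this toy setup the double-quantized path ends up with the larger average error, which is the same kind of effect that made v1 softer than v2. Real formats use per-block scales and non-uniform grids, so the actual numbers will differ.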