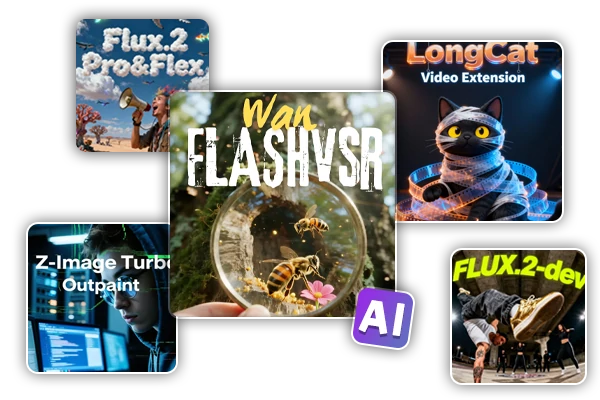

Welcome to SeaArt AI Workflow

Simplify your creative process with SeaArt's AI art generator workflows, built for artists, designers, and other creatives. From AI images to AI video, SeaArt AI offers what you need to bring your artistic vision to life.

Why Use ComfyUI Workflows on SeaArt AI?

Simple Interface

SeaArt AI provides an intuitive interface that makes workflows easy to set up. Every workflow is designed to be usable by anyone, even without programming experience.

Customizable Workflows

Design your workflow the way you want it. From advanced LoRA training to complex text-to-image generation, every step can be adjusted to fit your needs.

High Efficiency

SeaArt optimizes the AI art creation process. Get faster render times and fewer technical obstacles, so you can produce striking visuals quickly.

Thousands of Workflows for AI Art Creation

Unlock your artistic vision with SeaArt Workflow. Access thousands of preconfigured workflows for generating AI art in formats such as text-to-image, image-to-image, and image-to-video. These workflows integrate with powerful AI models such as Flux and SD 3.5, along with other popular options including ControlNet, giving you the flexibility to create visuals that match your preferences.

Full Control with Customizable Workflows

With SeaArt Workflow, you have full control over your creation process. Its customization options let you tailor each workflow to your specific needs: adjust parameters, swap AI models, and tune settings so the final result matches your vision.

Frequently Asked Questions

What is ComfyUI Workflow?

SeaArt AI's Workflow is a tool that goes beyond simple text prompts. Unlike traditional AI art generators, SeaArt offers a visual workflow system in which you build custom workflows to control image and video generation with fine-grained precision.

What kinds of AI art can I create with workflows?

Workflows let you create many kinds of AI art, including realistic portraits, fantasy landscapes, anime characters, and abstract pieces. You can run text-to-image, image-to-image, and image-to-video generation, apply style transfer, and even create 3D models.

Is ComfyUI Workflow suitable for beginners?

Yes! With a user-friendly drag-and-drop interface and real-time previews, SeaArt Workflow is accessible to beginners and advanced users alike, making it easy to create AI art.

Can I customize my workflows?

Yes. SeaArt AI offers a range of adjustable settings that let you configure a workflow to fit the specific needs of your project.