Why use ComfyUI workflows on SeaArt AI?

Simple interface

SeaArt AI provides an intuitive interface that makes workflows easy to set up. Every workflow is designed so that anyone can use it, even without coding experience.

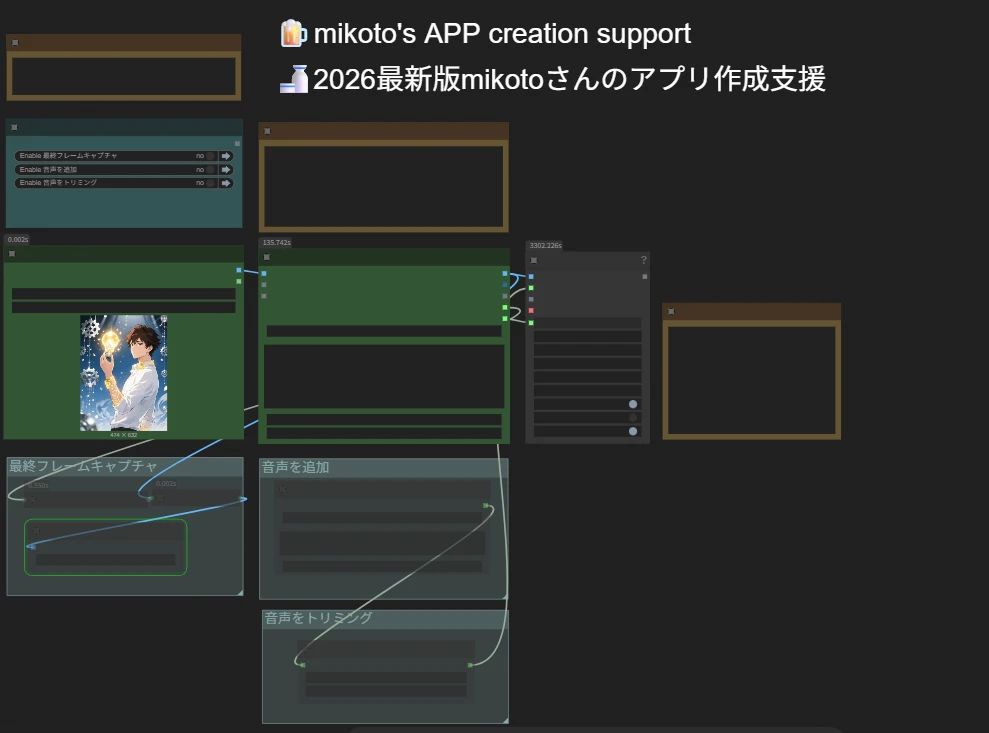

Custom workflows

Design workflows your own way. From advanced LoRA training to sophisticated text-to-image generation, every step can be adjusted to fit your needs.

High efficiency

SeaArt streamlines the AI art creation process. Produce striking visuals quickly, with faster rendering times and fewer technical hurdles.

FAQs

What is a ComfyUI workflow?

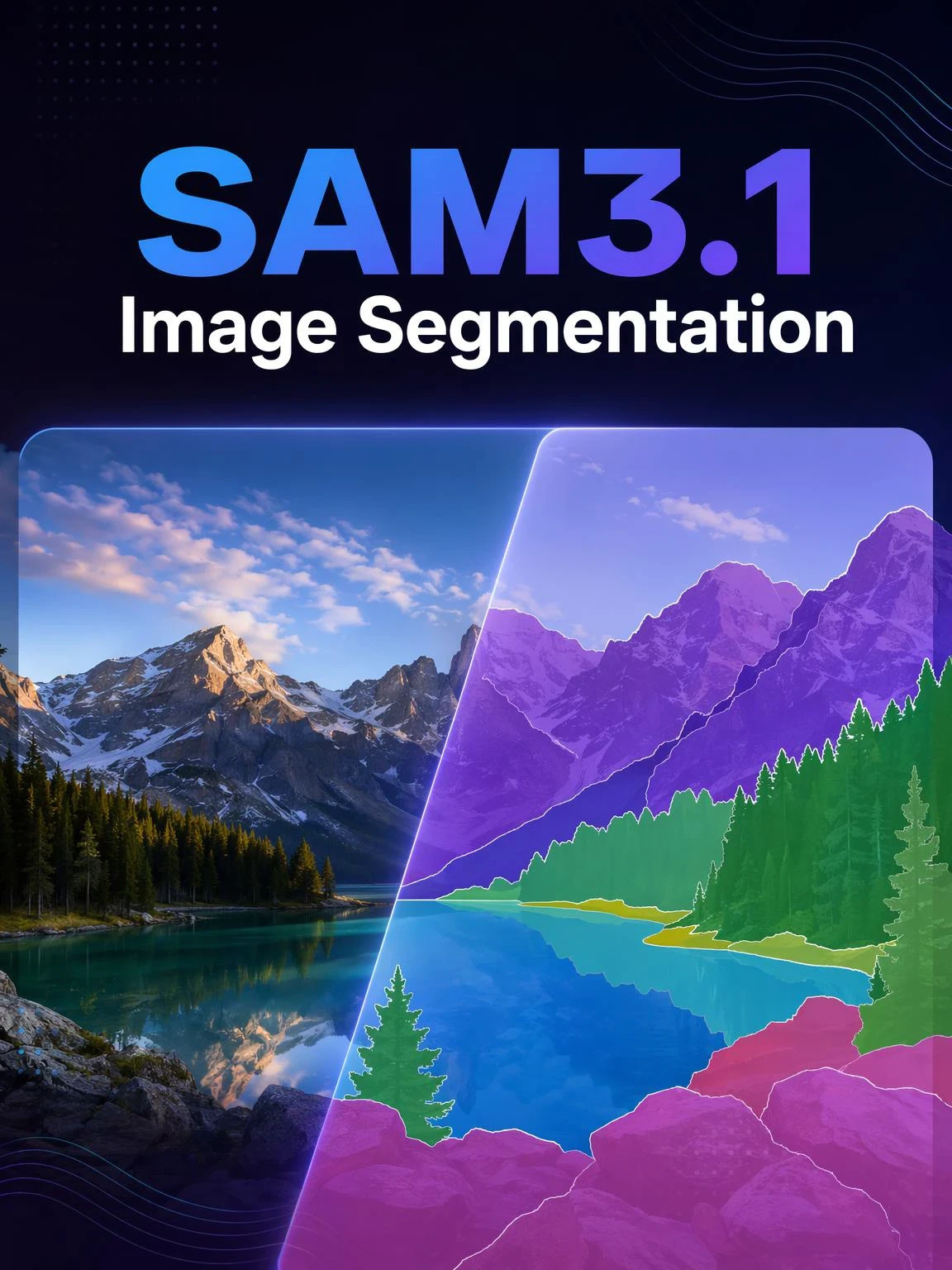

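SeaArt AI workflows are an innovative tool that goes beyond simple text prompts. Unlike conventional AI art generators, SeaArt provides a visual workflow system in which you can build custom workflows that give you fine-grained control over the image and video generation process.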

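What kinds of AI art can I create with workflows?

With these workflows you can easily create a wide range of AI art, including realistic portraits, fantasy landscapes, anime characters, and abstract pieces. Beyond text-to-image, image-to-image, and image-to-video generation, you can also apply style transfer or even generate 3D models.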

Are ComfyUI workflows suitable for beginners?

Yes! SeaArt's user-friendly drag-and-drop interface and real-time preview make workflows easy for beginners and advanced users alike, which simplifies AI art creation.

Can I customize workflows?

Yes. SeaArt AI offers a range of customization settings so that you can configure workflows to match your project's requirements.