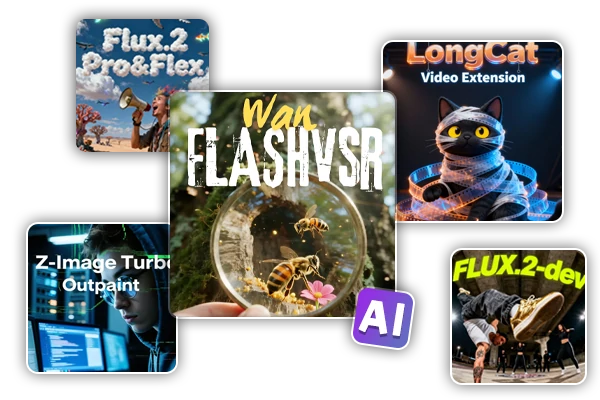

Welcome to SeaArt AI Workflow

Simplify your creative process with SeaArt's AI art generator workflows. Designed for artists, designers, and creators, these workflows cover a wide range of tasks, from AI images to AI videos. SeaArt AI has everything you need to bring your artistic vision to life.

Why Use ComfyUI Workflows on SeaArt AI?

Simple Interface

SeaArt AI offers an intuitive interface that makes workflows easy to configure. Every workflow is designed for everyone, including people with no coding experience.

Customizable Workflows

Design your workflow the way you want. Every step, from advanced LoRA training to complex text-to-image generation, can be adjusted to your needs.

High Efficiency

SeaArt optimizes the AI art creation process. Enjoy faster render times and fewer technical hurdles, and produce striking images quickly.

Thousands of Workflows for Creating AI Art

Unleash your artistic vision with SeaArt Workflow. Access thousands of preset workflows for creating AI art in formats such as text-to-image, image-to-image, and image-to-video. These workflows integrate with powerful AI models such as Flux and SD 3.5 and with popular options such as ControlNet, so you can generate striking images tailored to your preferences.

Full Control with Customizable Workflows

With SeaArt Workflow, you have full control over your generation process. We offer powerful customization options for designing workflows tailored to your needs. Adjust parameters, swap AI models, and fine-tune settings so the final output matches your vision.

Frequently Asked Questions

What Is a ComfyUI Workflow?

SeaArt AI Workflow is an innovative tool that goes beyond simple text prompts. Unlike traditional AI art generators, SeaArt lets you build custom workflows that control the image and video generation process in fine detail.

What types of AI art can I create with workflows?

These workflows let you create a wide range of AI art, from realistic portraits and fantasy landscapes to anime characters and abstract creations. You can easily run text-to-image, image-to-image, and image-to-video generation, apply style transfers, and even create 3D models.

Is ComfyUI Workflow suitable for beginners?

Yes! With our user-friendly drag-and-drop interface and real-time previews, SeaArt's Workflow is accessible to both beginners and advanced users, and it makes creating AI art simple.

Can I customize my workflow?

Yes. SeaArt AI offers a variety of customization settings that let you adjust your workflow to the needs of your project.