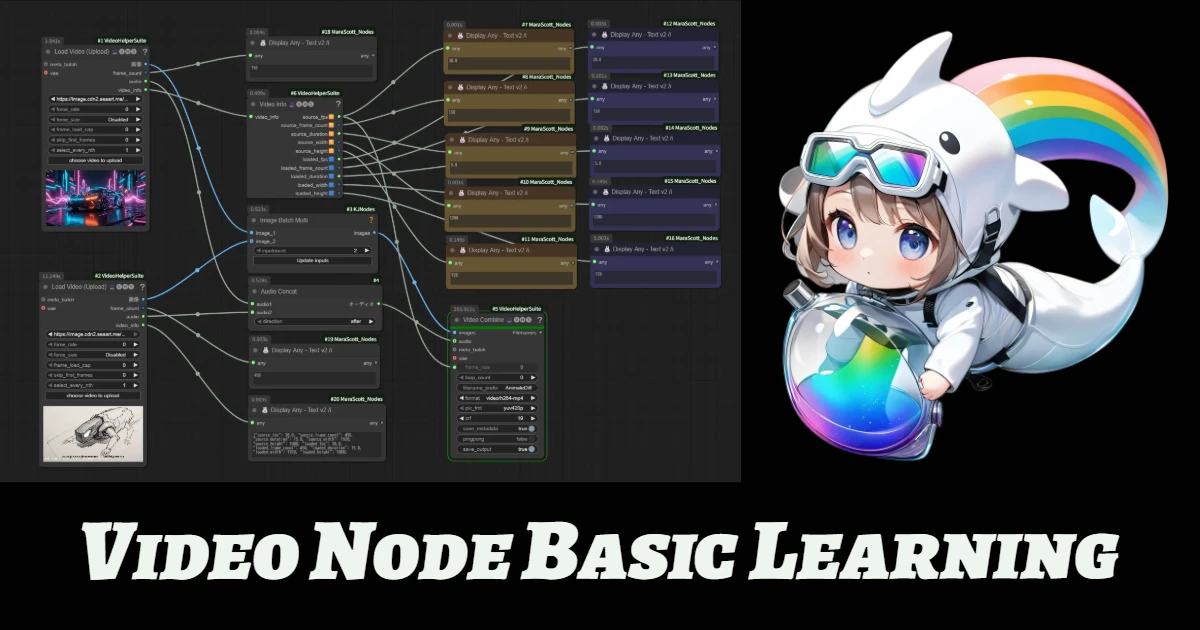

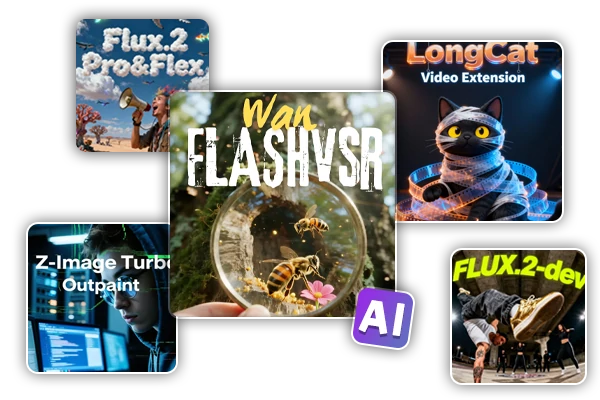

Welcome to SeaArt AI Workflow

Streamline your creative process with SeaArt's AI art generator workflows, designed for the varied needs of artists, designers, and creators. From AI images to AI video, SeaArt AI provides what you need to bring your artistic vision to life.

Why Use ComfyUI Workflow on SeaArt AI?

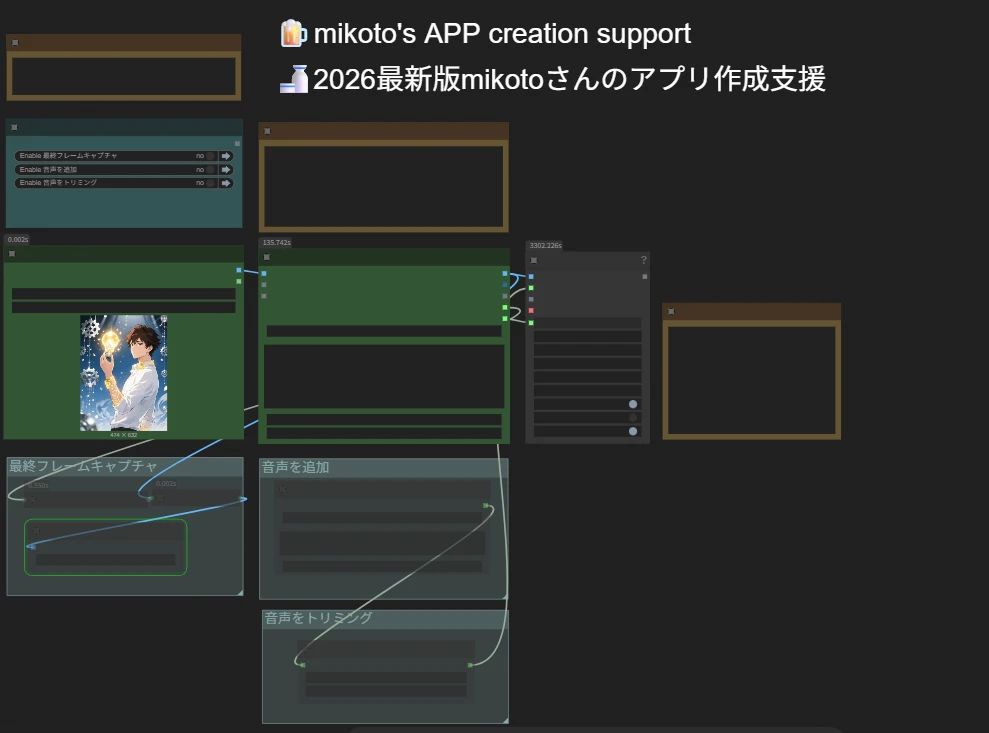

Simple Interface

SeaArt AI offers an intuitive interface that makes configuring workflows easy. Every workflow is built to be usable by anyone, even with no programming experience.

Customizable Workflows

Design your workflow the way you want. From advanced LoRA training to complex text-to-image generation, every step can be adjusted to fit your needs.

High Performance

SeaArt optimizes AI art generation pipelines. Enjoy faster render times and fewer technical barriers, and produce striking images quickly.

Thousands of Workflows for AI Art Generation

Unlock your artistic vision with SeaArt Workflow. Access thousands of preconfigured workflows to create AI art easily in formats such as text-to-image, image-to-image, and image-to-video. These workflows integrate with powerful AI models such as Flux and SD 3.5, along with other popular options including ControlNet, giving you the flexibility to create striking images to your taste.

Full Control with Custom Workflows

With SeaArt Workflow, you have full control over your creation process. We provide powerful customization options that let you tailor a workflow to your specific needs: adjust parameters, swap AI models, and fine-tune settings so the final result matches your vision.

Frequently Asked Questions

What is ComfyUI Workflow?

SeaArt AI's Workflow is a creative tool that goes beyond simple text prompts. Unlike traditional AI art generators, SeaArt provides a visual workflow system in which you can build custom workflows to control image and video generation with fine-grained precision.

What types of AI art can I create with workflows?

These workflows make it easy to create many kinds of AI art, including realistic portraits, fantasy landscapes, anime characters, and abstract creations. You can run text-to-image, image-to-image, and image-to-video generation, apply style transfer, and even generate 3D models.

Is ComfyUI Workflow suitable for beginners?

Yes! With an easy-to-use drag-and-drop interface and real-time previews, SeaArt Workflow is accessible to both beginners and advanced users, making AI art creation simple.

Can I customize my workflows?

Yes. SeaArt AI provides a range of customization settings that let you set up a workflow to fit the specific needs of your project.