Welcome to SeaArt AI Workflow

Simplify your creative process with SeaArt's AI art generator workflows, built to meet the diverse needs of artists, designers, and creators. From AI images to AI videos, SeaArt AI offers everything you need to realize your artistic vision.

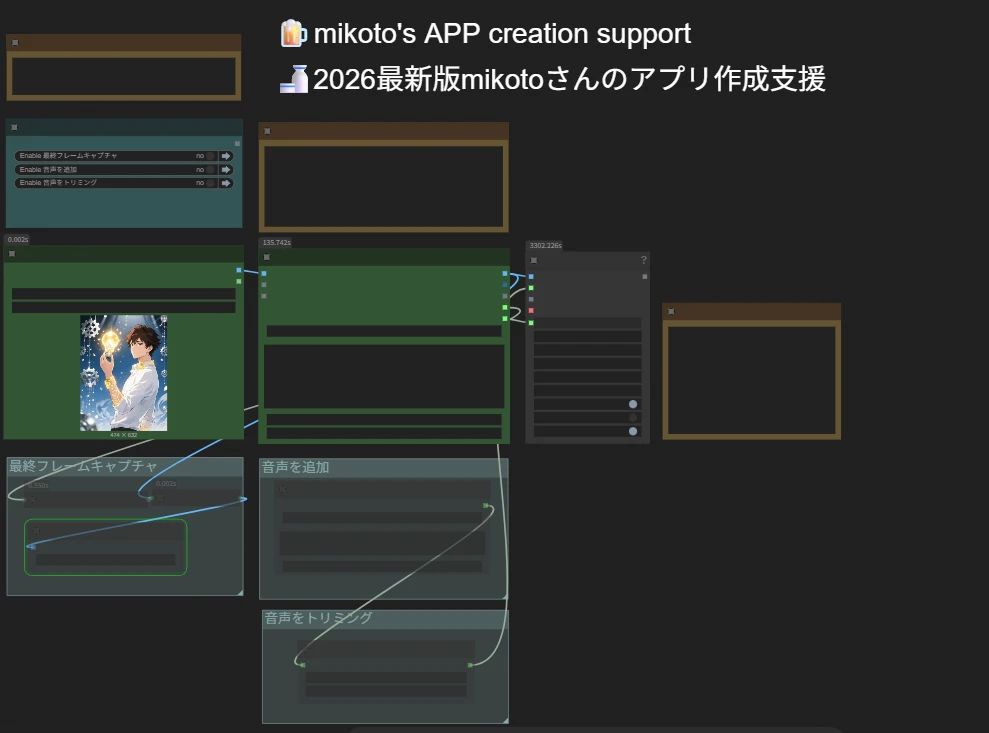

Why Use ComfyUI Workflows on SeaArt AI?

Simple Interface

SeaArt AI provides an intuitive interface that makes building workflows easy. Every workflow is designed to be accessible to everyone, even if you have no programming experience.

Customizable Workflows

Design your workflow your own way. From advanced LoRA training to complex text-to-image generation, every step can be adjusted to fit your needs.

High Efficiency

SeaArt streamlines the AI art creation process. Enjoy faster rendering times and fewer technical hurdles, and create stunning visuals quickly.

Thousands of Workflows for AI Art Creation

Unlock your artistic vision with SeaArt Workflow. Access thousands of preset workflows that make it easy to generate AI art in modes such as text-to-image, image-to-image, and image-to-video. These workflows integrate powerful AI models such as Flux, SD 3.5, and other popular options, along with ControlNet, giving you the flexibility to create stunning visuals that suit your preferences.

Full Control with Customizable Workflows

With SeaArt Workflow, you have full control over your generation process. We offer powerful customization options that let you tailor workflows to your specific needs. Adjust parameters, swap AI models, and fine-tune settings to ensure the final output matches your vision.

Frequently Asked Questions

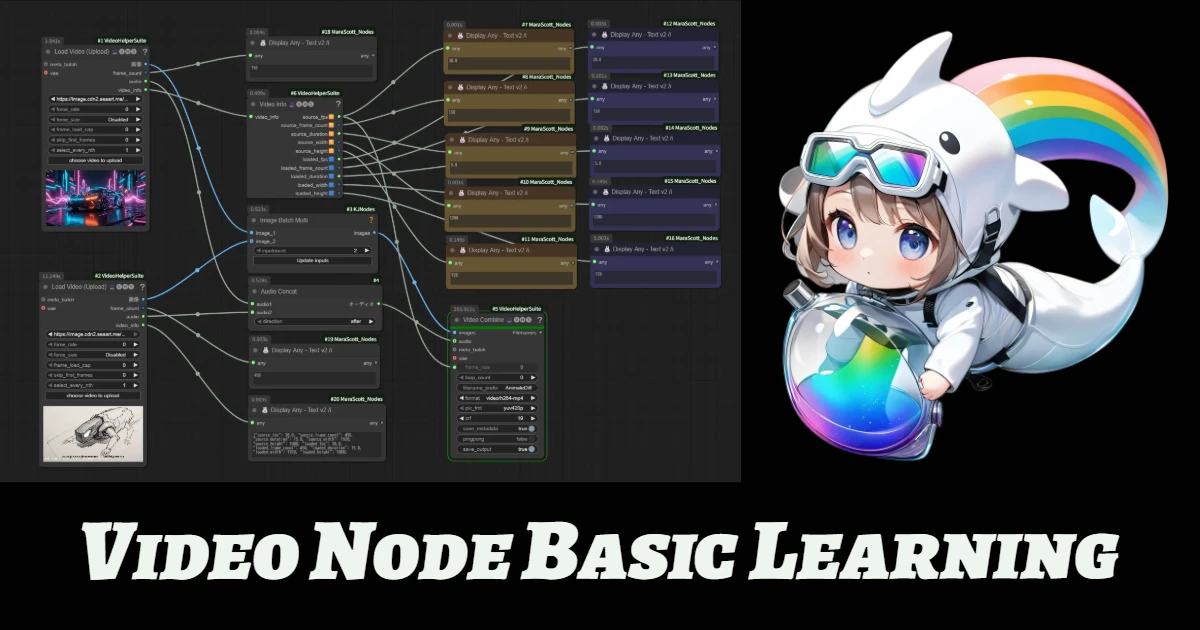

What is a ComfyUI workflow?

SeaArt AI Workflow is an innovative tool that goes beyond simple text prompts. Unlike traditional AI art generators, SeaArt offers a visual workflow system in which you build custom workflows that control image and video generation with fine-grained precision.

What kinds of AI art can I generate with workflows?

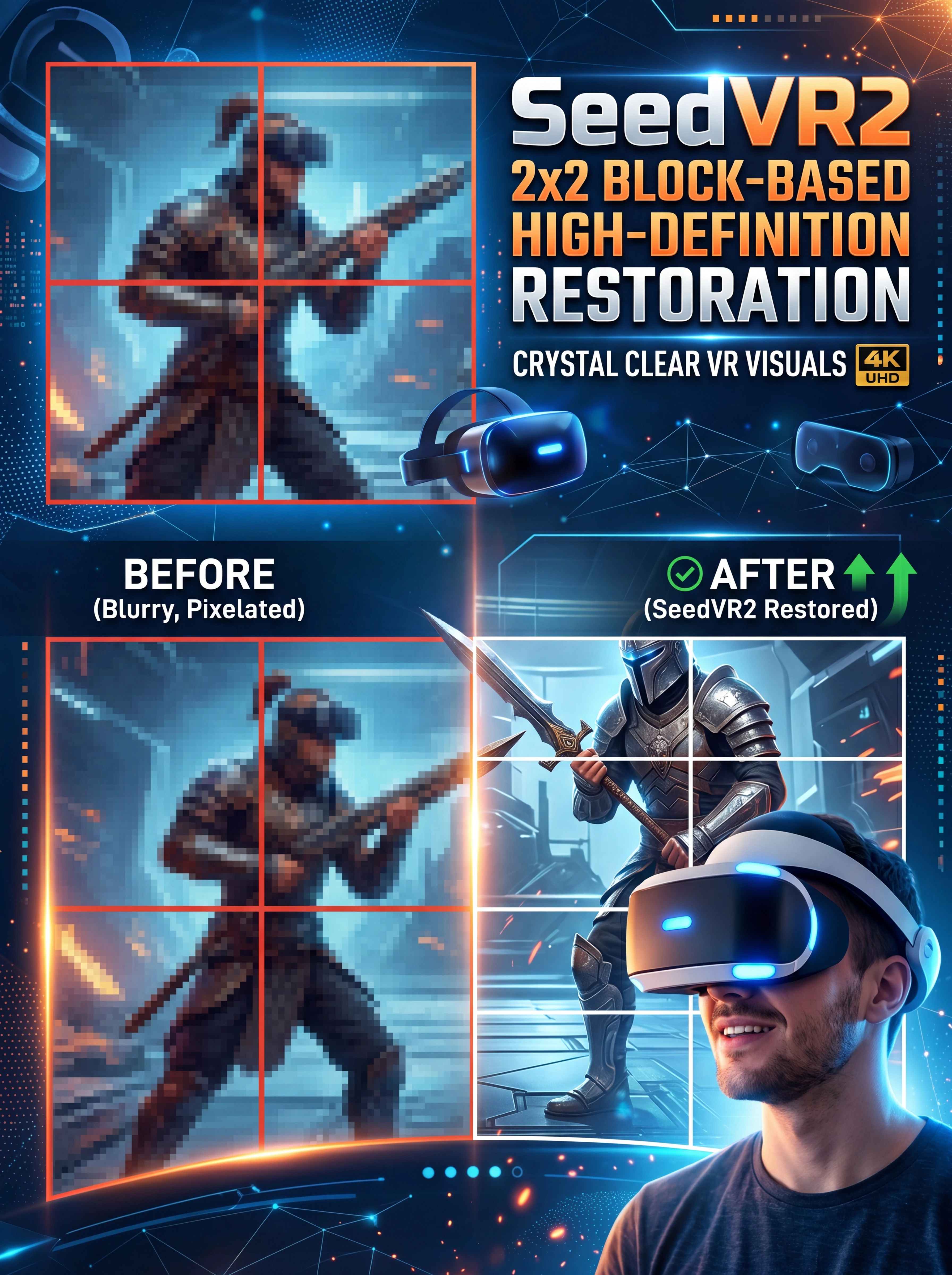

These workflows let you create a wide range of AI art with ease, including realistic portraits, fantasy landscapes, anime characters, and abstract creations. You can easily run text-to-image, image-to-image, and image-to-video generation, apply style transfer, and even generate 3D models.

Is a ComfyUI workflow suitable for beginners?

Yes! With its user-friendly drag-and-drop interface and real-time previews, SeaArt Workflow suits beginners and advanced users alike, making AI art creation simple.

Can I customize my own workflow?

Yes. SeaArt AI offers a variety of custom settings that let you adjust your workflow to the specific needs of your project.