Welcome to SeaArt AI Workflow

Simplify your creative process with SeaArt's AI art generation workflows, designed for the varied needs of artists, designers, and other creatives. From AI images to AI videos, SeaArt AI offers what you need to bring your artistic vision to life.

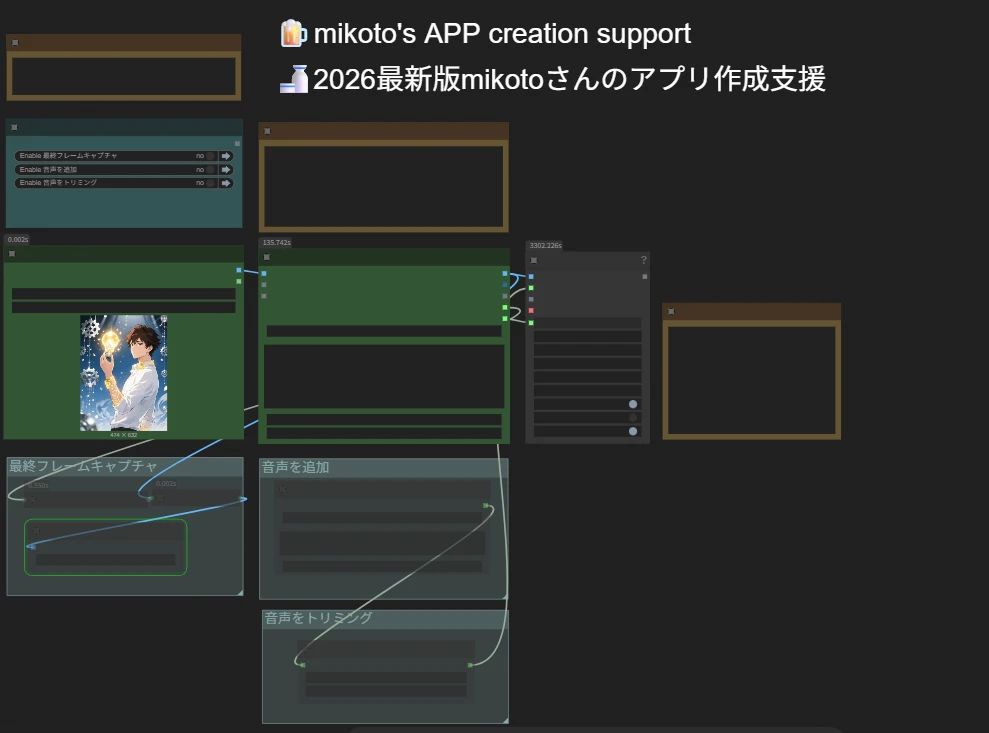

Why use ComfyUI Workflow on SeaArt AI?

Simple interface

SeaArt AI provides an intuitive interface that makes setting up workflows straightforward. The workflows are built for everyone, including people with no coding experience.

Customizable workflows

Design your workflow your way. From advanced LoRA training to complex text-to-image generation, every step can be adjusted to fit your needs.

High efficiency

SeaArt streamlines AI art creation, with faster rendering times and fewer technical hurdles, so you can produce striking visuals quickly.

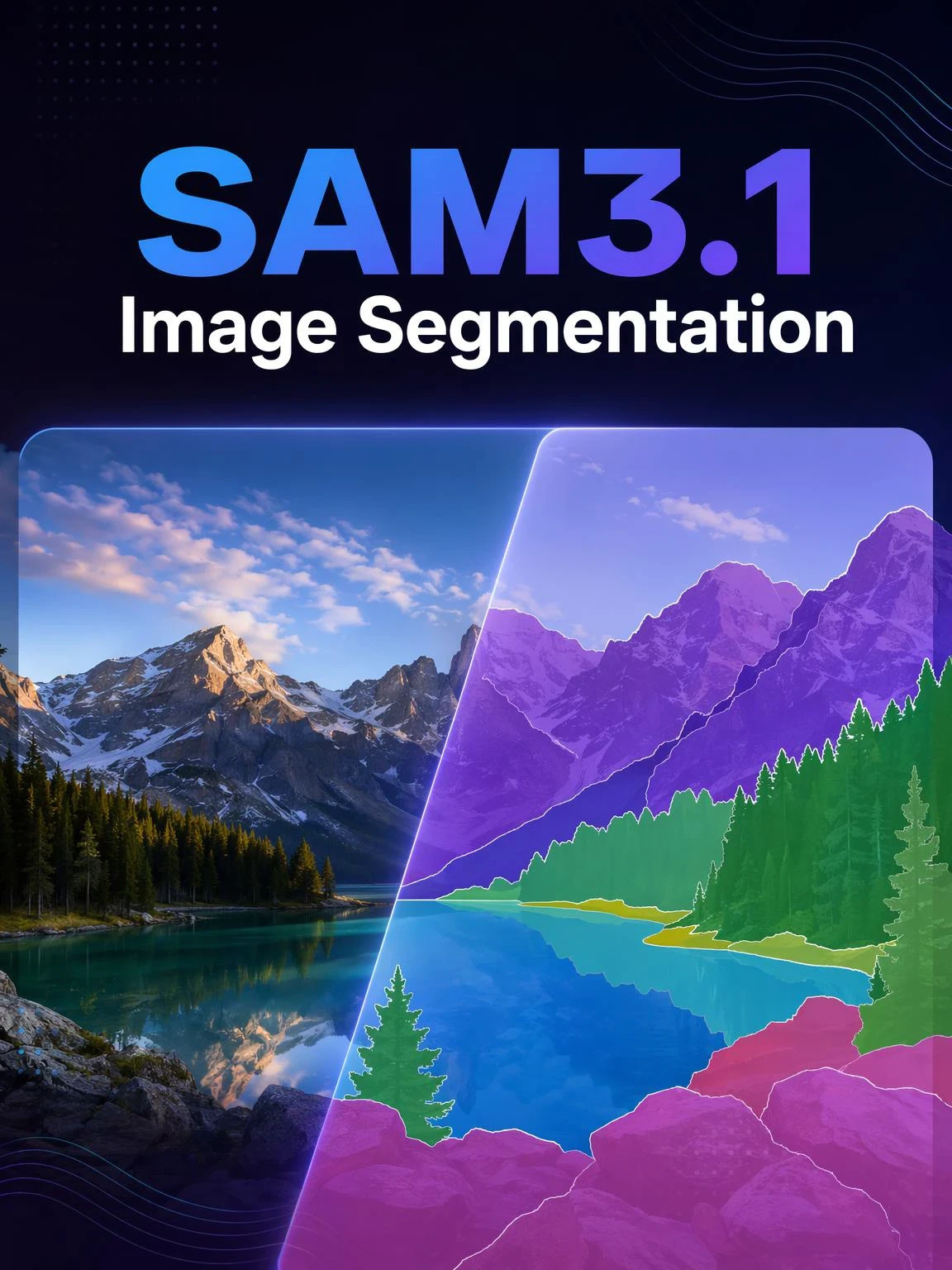

Thousands of workflows for AI art creation

Unlock your artistic vision with SeaArt Workflow. Access thousands of preconfigured workflows for generating AI art in modes such as text-to-image, image-to-image, and image-to-video. These workflows integrate with powerful AI models such as Flux and SD 3.5, along with other popular options including ControlNet, giving you the flexibility to create visuals that match your preferences.

Full control with customizable workflows

With SeaArt Workflow, you have full control over the generation process. Its customization options let you adapt workflows to your specific needs: tune the parameters, swap AI models, and refine the settings until the final result matches your vision.

Frequently asked questions

What is ComfyUI Workflow?

SeaArt AI's Workflow is a tool that goes beyond simple text prompts. Unlike traditional AI art generators, SeaArt offers a visual workflow system in which you build custom workflows to control image and video generation with fine-grained precision.

What types of AI art can I generate with workflows?

These workflows let you create a wide range of AI art, including realistic portraits, fantasy landscapes, anime characters, and abstract pieces. You can run text-to-image, image-to-image, and image-to-video generation, apply style transfer, and even generate 3D models.

Is ComfyUI Workflow suitable for beginners?

Yes! With its easy-to-use drag-and-drop interface and real-time previews, SeaArt's Workflow is accessible to beginners and advanced users alike, making AI art creation simple.

Can I customize my workflow?

Yes. SeaArt AI offers a range of customizable settings that let you adjust your workflow to the specific needs of your project.