研习社

精选工作流

Wan Video

新精选

挑战活动

基础

视频生成

音频生成

3D生成

FLUX

风格

设计

摄影

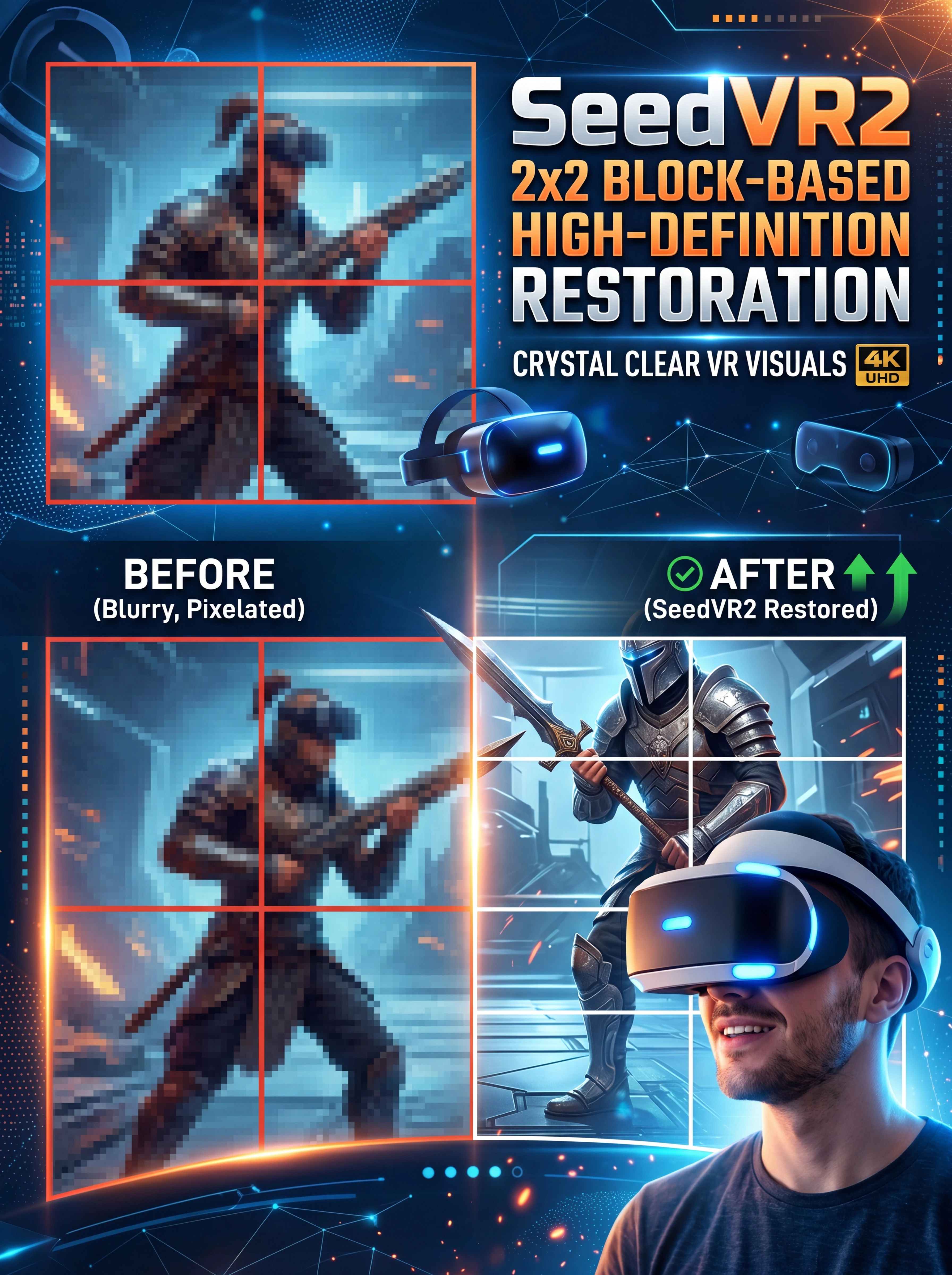

图片处理

创意玩法

重置选择

确定

节点筛选器

内容排序

热门

最新

重置选择

筛选

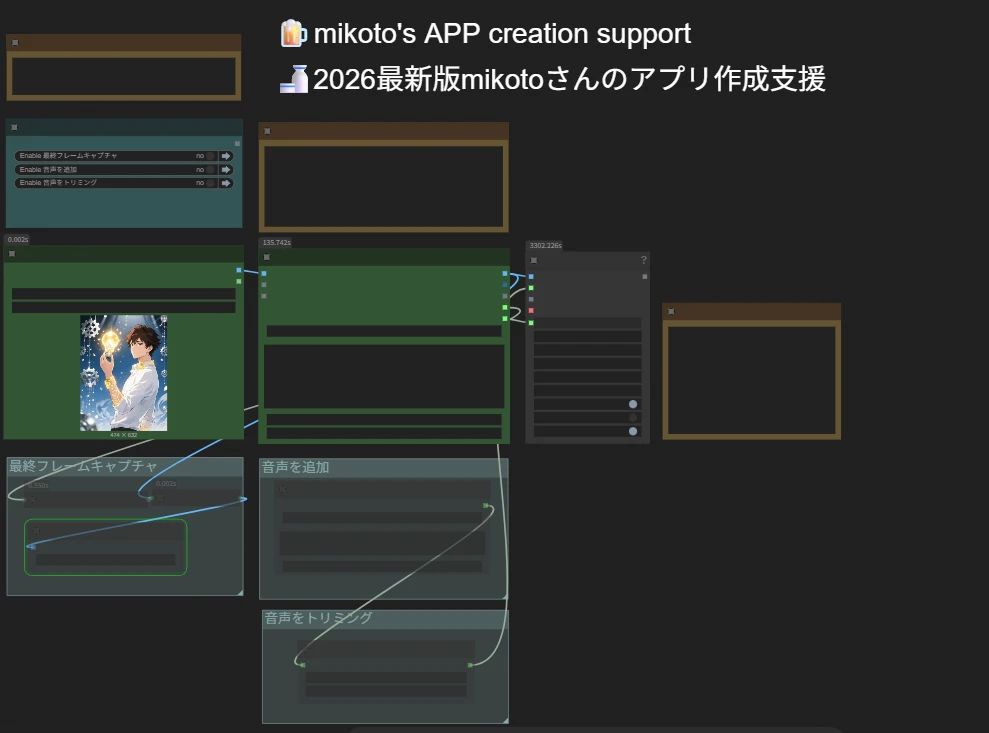

Why choose ComfyUI workflows on SeaArt AI (海艺AI)?

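Simple interface

SeaArt AI provides an intuitive interface that makes workflows easy to configure. You can run every workflow even if you have no programming experience.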

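Customizable workflows

Design workflows your own way. From advanced LoRA training to fine-grained, complex text-to-image generation, every step can be adjusted to your needs.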

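High efficiency

SeaArt has optimized the AI art creation process, shortening render times and lowering technical barriers so you can produce striking visuals quickly.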

Frequently asked questions

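What is a ComfyUI workflow?

A SeaArt AI workflow is a tool that goes beyond simple text prompts. Unlike traditional AI art generators, SeaArt provides a visual workflow system in which you build custom workflows and precisely control the image and video generation process.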

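What types of AI art can I generate with workflows?

Our workflows let you generate many types of AI art, including realistic portraits, fantasy landscapes, anime characters, and abstract pieces. You can run text-to-image, image-to-image, and image-to-video, apply style changes, and even generate 3D models.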

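Can beginners use ComfyUI workflows?

Yes! With an easy-to-use drag-and-drop interface and real-time preview, SeaArt's workflows suit both beginners and advanced users and make AI art creation straightforward.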

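Can I customize my workflows?

Yes. SeaArt AI offers a range of customizable settings that let you adjust a workflow to the needs of a specific project.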